Zero-Annotation Segmentation via YOLO-Guided SAM Prompt Automation

A fully automated plant segmentation pipeline that combines YOLO detection with a two-stage SAM prompt selection strategy, outperforming supervised baselines while requiring zero manual labels.

Manual segmentation labels are expensive to obtain in large field trials. This project develops an annotation-free segmentation pipeline that leverages YOLO detections to generate structured prompts for the Segment Anything Model (SAM), enabling scalable, high-quality plant segmentation without human annotations.

Methodology

The pipeline turns YOLO bounding boxes into effective SAM prompts, with a two-stage prompt selection process at its core:

- YOLO-based object localization. Use YOLO to detect plants and produce bounding boxes as initial regions of interest for segmentation.

- Two-stage prompt selection. Within each box, automatically select point or box prompts for SAM in two stages, refining from coarse region proposals to more precise seeds.

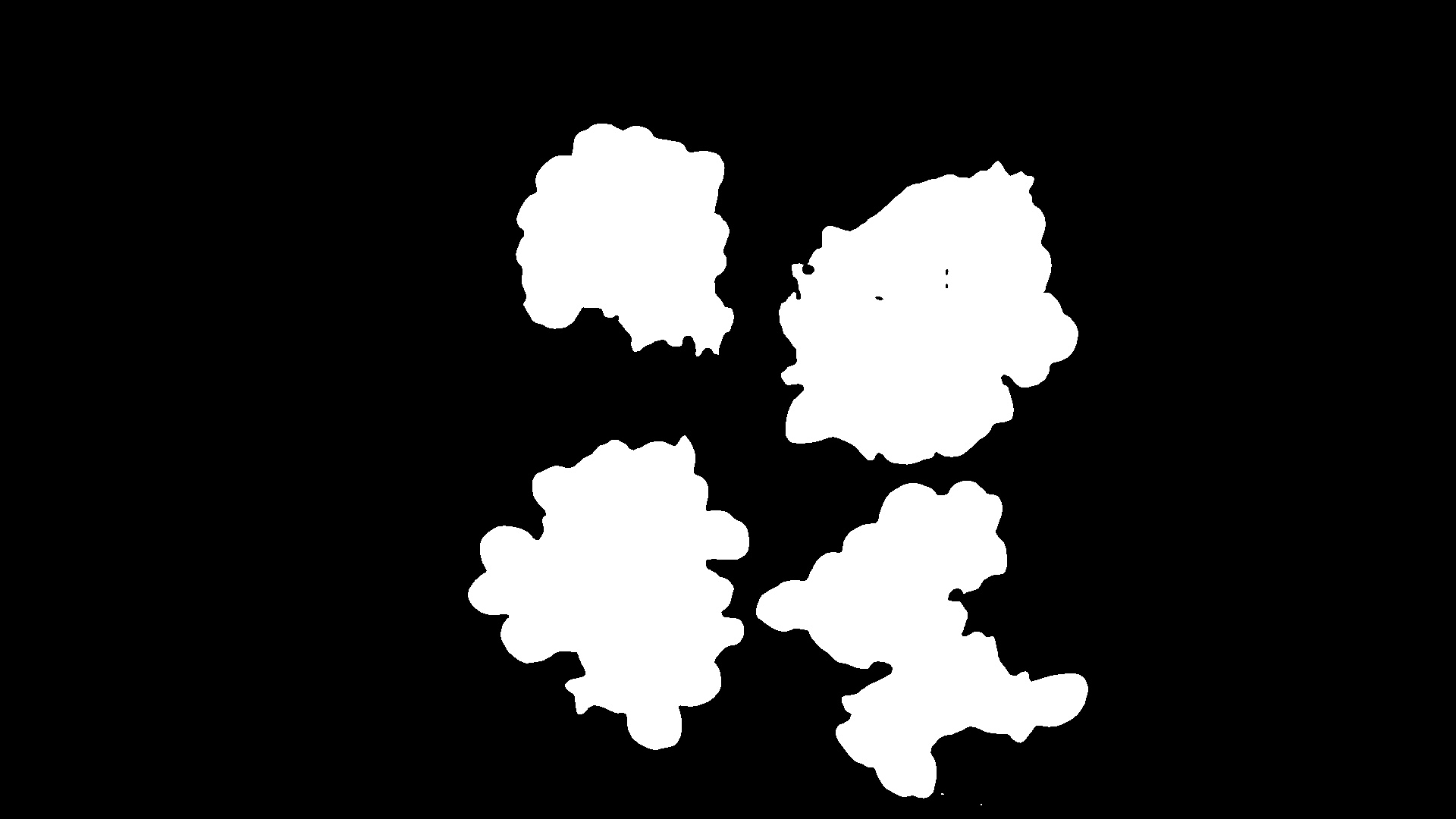

- SAM-driven segmentation. Run SAM with the automatically generated prompts to obtain high-quality plant masks without any manual labeling.

- Post-processing for consistency. Apply simple geometric and morphological filters to enforce mask consistency across frames or plots when needed.
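The two-stage prompt selection can be illustrated with a minimal NumPy sketch. It assumes YOLO boxes arrive in pixel `xyxy` format and, in place of the unspecified selection rule, uses the Excess Green index (ExG = 2G - R - B), a common vegetation cue swapped in here as an assumption. Stage 1 pads the detection into a coarse box prompt; stage 2 picks the most plant-like pixels inside it as positive seeds and the least plant-like as negative seeds. The names `pad_box`, `excess_green` and `select_point_prompts`, and the defaults for `pad`, `n_pos` and `n_neg`, are hypothetical.

```python
import numpy as np


def pad_box(box, width, height, pad=0.05):
    """Stage 1 (coarse region): enlarge a YOLO xyxy box by `pad` of its size, clipped to the image."""
    x0, y0, x1, y1 = box
    dx, dy = pad * (x1 - x0), pad * (y1 - y0)
    return np.array([
        max(0.0, x0 - dx),
        max(0.0, y0 - dy),
        min(width - 1.0, x1 + dx),
        min(height - 1.0, y1 + dy),
    ])


def excess_green(rgb):
    """Hypothetical plant cue: Excess Green index ExG = 2G - R - B on an RGB image in [0, 1]."""
    rgb = rgb.astype(float)
    return 2.0 * rgb[..., 1] - rgb[..., 0] - rgb[..., 2]


def select_point_prompts(rgb, box, n_pos=3, n_neg=2):
    """Stage 2 (precise seeds): within the box, take the most plant-like pixels as
    positive points and the least plant-like pixels as negative points.

    Returns SAM-style point_coords of shape (N, 2) in (x, y) order and
    point_labels of shape (N,), with 1 = foreground and 0 = background.
    """
    x0, y0, x1, y1 = np.round(box).astype(int)
    score = excess_green(rgb[y0:y1 + 1, x0:x1 + 1])
    order = np.argsort(score, axis=None)  # indices into the flattened crop, ascending ExG
    pos = order[::-1][:n_pos]             # highest ExG -> likely plant
    neg = order[:n_neg]                   # lowest ExG -> likely soil or background
    rows, cols = np.unravel_index(np.concatenate([pos, neg]), score.shape)
    coords = np.stack([cols + x0, rows + y0], axis=1)  # back to full-image (x, y)
    labels = np.array([1] * len(pos) + [0] * len(neg))
    return coords, labels
```

With the `segment_anything` package, the padded box and the seeds could be passed together to `SamPredictor.predict(point_coords=coords, point_labels=labels, box=box, multimask_output=False)` after calling `set_image` on the RGB frame. Taking the top-k pixels tends to cluster seeds next to each other; spreading them across the plant would be a natural refinement.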
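The specific geometric and morphological filters are not detailed above. One plausible per-box cleanup is sketched below in plain NumPy, under stated assumptions: a 3x3 morphological opening to remove specks, clipping the mask to its YOLO box, and rejecting masks that fill less than a hypothetical `min_fill` fraction of the box. The function names are illustrative, and cross-frame consistency is not covered.

```python
import numpy as np


def _shifts(mask):
    """Yield the nine 3x3-neighbourhood shifts of a boolean mask (False-padded at the border)."""
    h, w = mask.shape
    padded = np.pad(mask, 1)  # constant padding with False
    for dy in range(3):
        for dx in range(3):
            yield padded[dy:dy + h, dx:dx + w]


def binary_open(mask):
    """Opening with a 3x3 square: erosion (AND over shifts), then dilation (OR over shifts)."""
    eroded = np.logical_and.reduce(list(_shifts(mask)))
    return np.logical_or.reduce(list(_shifts(eroded)))


def postprocess_mask(mask, box, min_fill=0.1):
    """Hypothetical filter for one SAM mask and its xyxy YOLO box.

    1. Opening removes specks and spurs thinner than the 3x3 element.
    2. Pixels outside the box are discarded.
    3. Masks covering less than `min_fill` of the box are rejected (returns None).
    """
    x0, y0, x1, y1 = np.round(box).astype(int)
    opened = binary_open(mask.astype(bool))
    cleaned = np.zeros_like(opened)
    cleaned[y0:y1 + 1, x0:x1 + 1] = opened[y0:y1 + 1, x0:x1 + 1]
    box_area = (x1 - x0 + 1) * (y1 - y0 + 1)
    return cleaned if cleaned.sum() / box_area >= min_fill else None
```

A fixed 3x3 element keeps the sketch dependency-free; on high-resolution field images a larger structuring element, or `scipy.ndimage` equivalents, would likely be needed.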

Results & Impact

The zero-annotation segmentation pipeline improves IoU by about 9.2% over strong supervised baselines trained on manually labeled data, while removing the labeling bottleneck entirely.
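For reference, IoU between a predicted mask and a ground-truth mask is the size of their intersection divided by the size of their union. A minimal NumPy version is below, assuming binary masks of equal shape; it does not reproduce the evaluation behind the reported figure, and treating two empty masks as a perfect match is a convention chosen here.

```python
import numpy as np


def mask_iou(pred, gt):
    """Intersection over union of two binary masks with the same shape."""
    pred, gt = pred.astype(bool), gt.astype(bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return np.logical_and(pred, gt).sum() / union
```

For example, two 2x2 squares offset by one column share 2 of their 6 covered pixels, giving an IoU of 1/3. A dataset-level score is typically the mean of per-image or per-instance IoU values.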

This work illustrates how foundation models like SAM, when paired with task-specific detection and prompt engineering, can replace expensive human annotation in agricultural computer vision.