Lightweight Detection with Mamba and Frequency-Domain Channel Mixing

A lightweight detection model for crop ripeness staging that combines Mamba-based state space modeling with frequency-domain channel mixing to approach YOLOv12-L accuracy with fewer parameters and substantially less computation.

This project targets efficient in-field detection of fruit and its ripeness stage under resource constraints. We design a lightweight detector that incorporates a Mamba block and a frequency-domain channel mixer, achieving strong accuracy with a smaller model and fewer FLOPs than the larger YOLOv12-L baseline.

Methodology

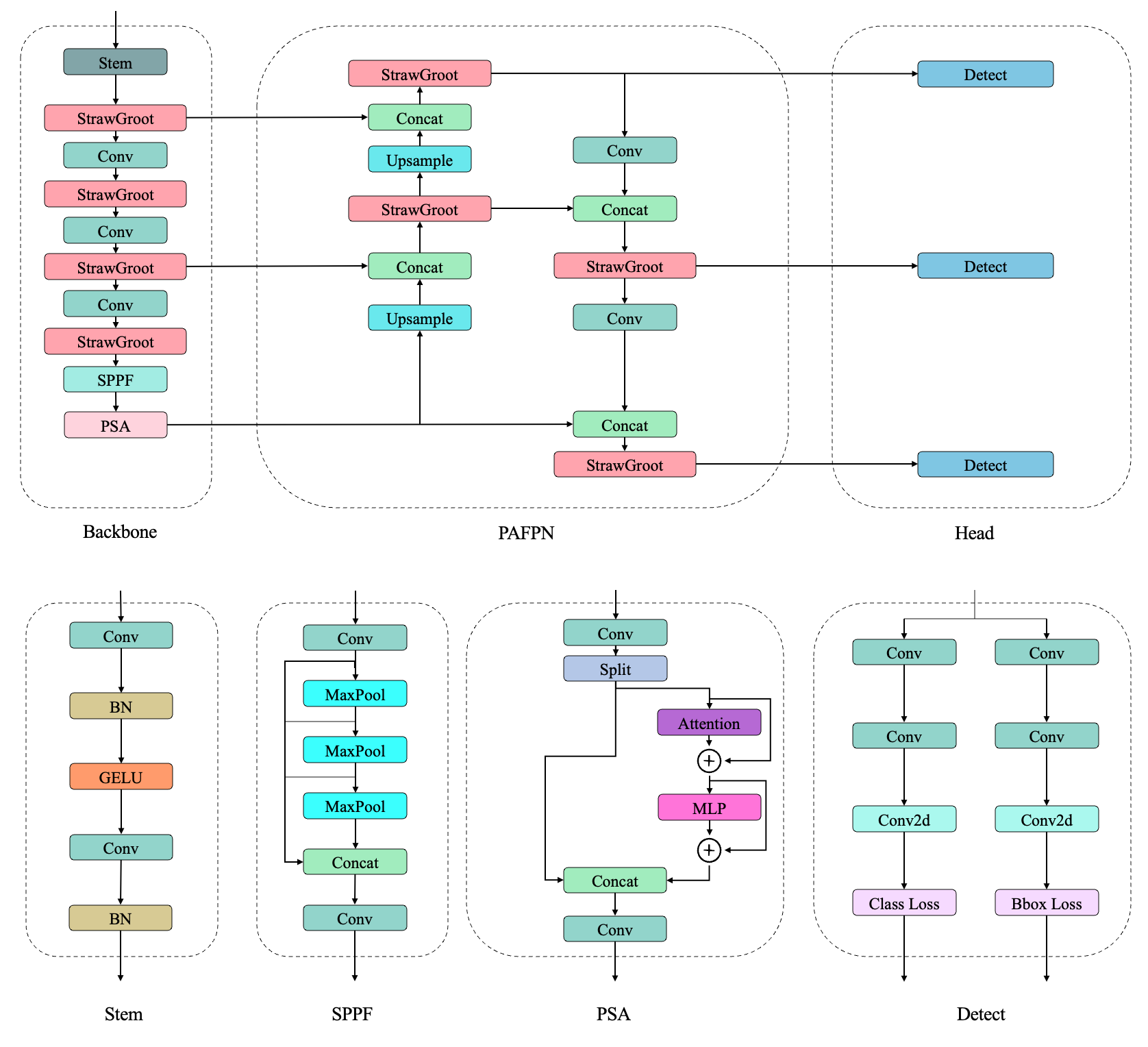

The approach balances accuracy and efficiency through four components:

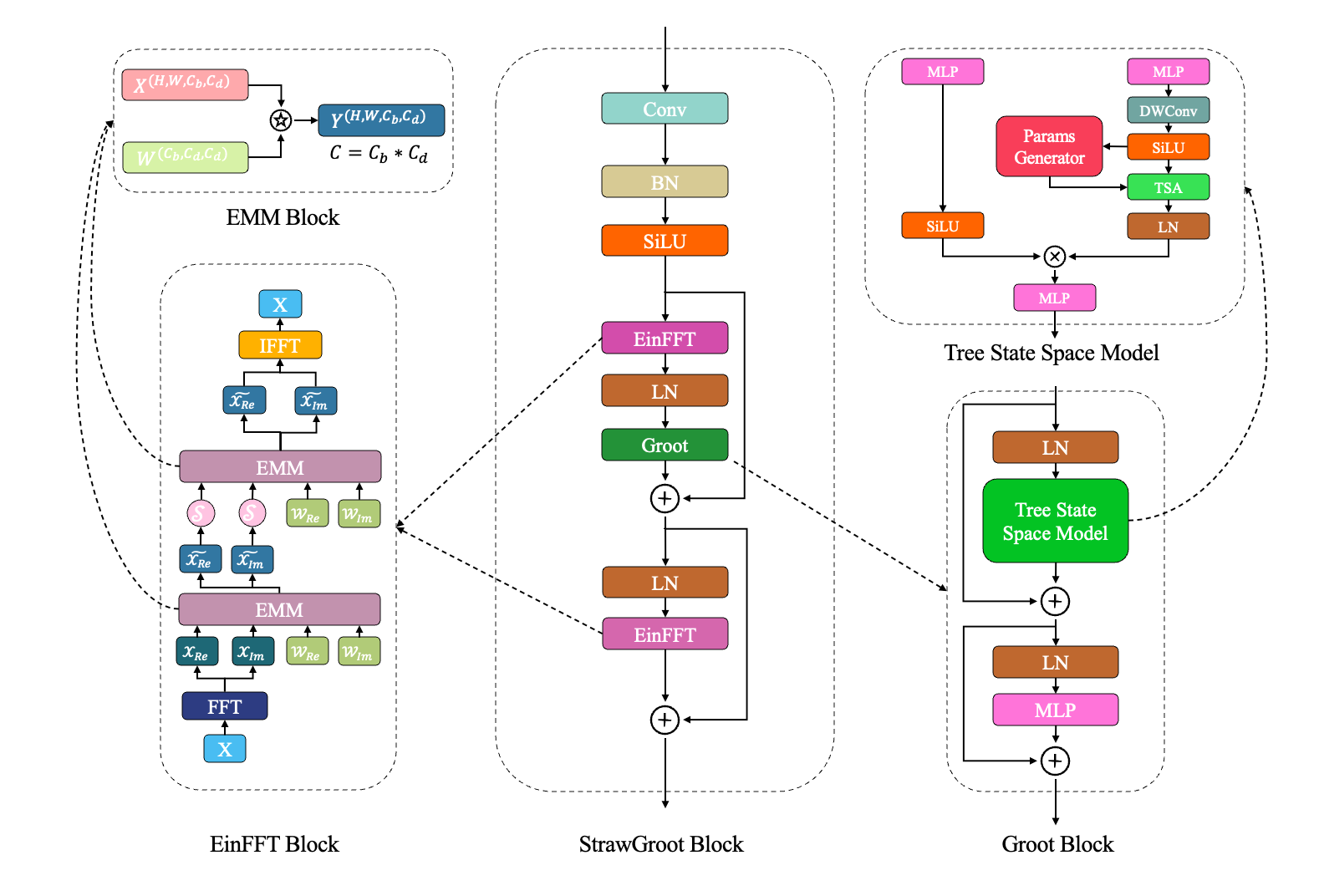

- Mamba-based state space block. Replace parts of the convolutional backbone or neck with a Mamba block to capture long-range dependencies in a parameter- and compute-efficient way.

- Frequency-domain channel mixing. Apply frequency-domain transforms to feature maps and perform channel mixing to emphasize informative bands related to fruit texture and boundaries.
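To make the Mamba bullet more concrete, here is a minimal NumPy sketch of a selective state space scan (the core recurrence of Mamba's S6 layer) applied to a feature map flattened into a token sequence. It assumes raster-scan flattening, a diagonal negative state matrix `A`, and randomly initialized projection weights; the names `selective_scan`, `mamba_mixer_2d`, `W_delta`, `W_B`, and `W_C` are hypothetical. It uses the simplified (Euler) discretization of `B` and omits the gating, short convolution, and hardware-aware parallel scan of a full Mamba block, so it illustrates the mechanism rather than reproducing this project's implementation.

```python
import numpy as np


def selective_scan(x, delta, A, B, C, D):
    """Sequential selective SSM scan (illustrative O(L) loop, not a parallel scan).

    x:     (L, d)  input tokens
    delta: (L, d)  positive, input-dependent step sizes
    A:     (d, n)  negative real diagonal state matrix (one row per channel)
    B, C:  (L, n)  input-dependent input/output projections
    D:     (d,)    skip-connection weights
    """
    L, d = x.shape
    h = np.zeros((d, A.shape[1]))                # hidden state, one n-dim state per channel
    y = np.empty_like(x)
    for t in range(L):
        dA = np.exp(delta[t][:, None] * A)        # zero-order-hold discretization of A
        dB = delta[t][:, None] * B[t][None, :]    # simplified (Euler) discretization of B
        h = dA * h + dB * x[t][:, None]           # h_t = A_bar * h_{t-1} + B_bar * x_t
        y[t] = h @ C[t] + D * x[t]                # y_t = C_t h_t + D x_t
    return y


def mamba_mixer_2d(feat, W_delta, W_B, W_C, A, D):
    """Flatten a (c, H, W) feature map in raster order, scan it, and reshape back."""
    c, hgt, wid = feat.shape
    tokens = feat.reshape(c, hgt * wid).T         # (L, c) with L = H * W
    delta = np.logaddexp(0.0, tokens @ W_delta)   # softplus keeps step sizes positive
    y = selective_scan(tokens, delta, A, tokens @ W_B, tokens @ W_C, D)
    return y.T.reshape(c, hgt, wid)


rng = np.random.default_rng(0)
c, n = 8, 16
feat = rng.standard_normal((c, 4, 4))
W_delta = 0.1 * rng.standard_normal((c, c))
W_B = 0.1 * rng.standard_normal((c, n))
W_C = 0.1 * rng.standard_normal((c, n))
A = -np.exp(rng.standard_normal((c, n)))          # negative entries give a decaying, stable state
D = np.ones(c)
out = mamba_mixer_2d(feat, W_delta, W_B, W_C, A, D)
print(out.shape)  # (8, 4, 4)
```

Because `B`, `C`, and the step size are computed from the tokens themselves, the state can selectively keep or forget context along the whole sequence, while the cost grows only linearly with the number of tokens.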
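The frequency-domain bullet can be sketched in the same way. The version below is one plausible reading, not the project's confirmed design: it takes a real 2D FFT of each channel, groups frequency bins into radial bands from low to high spatial frequency, and applies a separate channel-mixing matrix per band before transforming back. The band layout and the names `radial_band_index` and `freq_channel_mix` are hypothetical; in a trained model the per-band matrices would be learned so that bands carrying texture and boundary cues receive more weight.

```python
import numpy as np


def radial_band_index(h, w, num_bands):
    """Assign each rfft2 frequency bin to one of `num_bands` radial bands."""
    fy = np.fft.fftfreq(h)[:, None]               # signed vertical frequencies, (h, 1)
    fx = np.fft.rfftfreq(w)[None, :]              # non-negative horizontal frequencies, (1, w//2+1)
    r = np.sqrt(fy**2 + fx**2)
    r = r / r.max()                               # 0 = DC, 1 = highest frequency
    return np.minimum((r * num_bands).astype(int), num_bands - 1)


def freq_channel_mix(feat, band_mixers):
    """feat: (c_in, H, W) real; band_mixers: (K, c_out, c_in) real, one matrix per band."""
    _, h, w = feat.shape
    spec = np.fft.rfft2(feat, axes=(1, 2))        # (c_in, H, W//2+1) complex spectrum
    bands = radial_band_index(h, w, band_mixers.shape[0])
    out = np.zeros((band_mixers.shape[1],) + spec.shape[1:], dtype=complex)
    for b, mixer in enumerate(band_mixers):
        mask = bands == b
        out[:, mask] = mixer @ spec[:, mask]      # mix channels within this band only
    return np.fft.irfft2(out, s=(h, w), axes=(1, 2))


rng = np.random.default_rng(0)
x = rng.standard_normal((8, 16, 16))
mixers = rng.standard_normal((4, 8, 8)) / np.sqrt(8)
y = freq_channel_mix(x, mixers)
print(y.shape)  # (8, 16, 16)
```

If every band used the same matrix, this would collapse to an ordinary 1x1 convolution; the per-band matrices are what let the mixer treat smooth, low-frequency content differently from high-frequency texture and edges.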

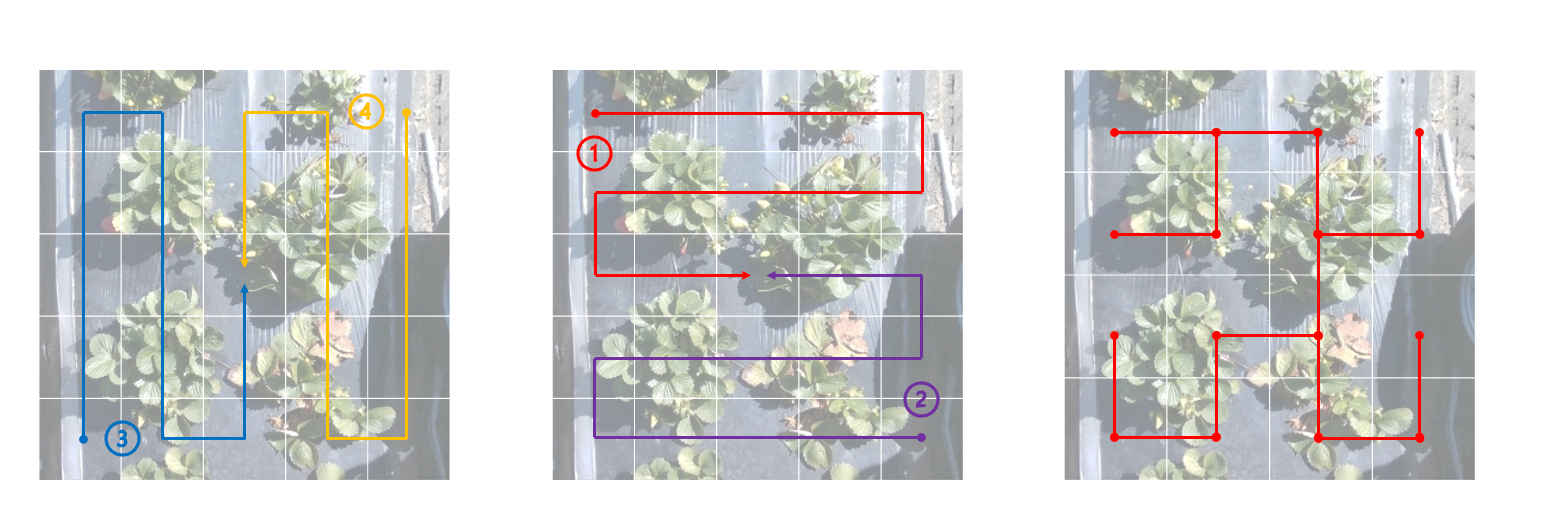

- Task-specific training. Train the model on in-field crop ripeness staging data, with augmentations tailored to illumination changes, occlusion, and background clutter.

- Efficiency-aware evaluation. Measure both detection accuracy and resource usage against YOLOv12-L to quantify the trade-offs.
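For the task-specific training bullet, the sketch below shows two simple augmentations of the kind described: a photometric jitter for illumination changes and a random solid rectangle for occlusion (cutout-style). The function names and parameter ranges are illustrative assumptions, not the project's settings, and clutter-oriented augmentations (for example mosaic or copy-paste) are not shown. Neither operation moves pixels, so existing bounding-box labels can be reused unchanged.

```python
import numpy as np


def illumination_jitter(img, rng, brightness=0.3, contrast=0.3, gamma=(0.7, 1.5)):
    """Randomly perturb contrast, brightness and gamma of an (H, W, 3) image in [0, 1]."""
    mean = img.mean()
    out = (img - mean) * (1.0 + rng.uniform(-contrast, contrast)) + mean  # contrast about the mean
    out = out * (1.0 + rng.uniform(-brightness, brightness))             # global brightness scale
    return np.clip(out, 0.0, 1.0) ** rng.uniform(*gamma)                  # gamma mimics exposure shifts


def random_occlusion(img, rng, max_frac=0.25):
    """Paste a random solid-color rectangle to mimic occluders such as leaves."""
    h, w = img.shape[:2]
    oh = max(1, int(h * rng.uniform(0.05, max_frac)))
    ow = max(1, int(w * rng.uniform(0.05, max_frac)))
    y0 = int(rng.integers(0, h - oh + 1))
    x0 = int(rng.integers(0, w - ow + 1))
    out = img.copy()
    out[y0:y0 + oh, x0:x0 + ow] = rng.uniform(0.0, 1.0, size=img.shape[2])
    return out, (x0, y0, ow, oh)


rng = np.random.default_rng(42)
image = rng.uniform(0.0, 1.0, size=(64, 64, 3))
aug, patch = random_occlusion(illumination_jitter(image, rng), rng)
print(aug.shape, patch)
```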
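For the efficiency-aware evaluation, parameter counts and FLOPs can be tallied layer by layer. The sketch below does this for standard and grouped (including depthwise) convolutions, using the common convention that one multiply-accumulate counts as 2 FLOPs; the `ConvSpec` class and the toy layer lists are hypothetical and unrelated to the project's actual architecture. Operations such as FFTs and selective scans need their own cost formulas, and generic FLOP counters may not cover them, so they should be accounted for explicitly when comparing against YOLOv12-L.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConvSpec:
    c_in: int
    c_out: int
    k: int
    out_hw: tuple       # (H_out, W_out) of the output feature map
    groups: int = 1
    bias: bool = True

    def params(self) -> int:
        weights = self.c_out * (self.c_in // self.groups) * self.k * self.k
        return weights + (self.c_out if self.bias else 0)

    def flops(self) -> int:
        # 2 FLOPs (multiply + add) per multiply-accumulate; bias additions are ignored
        macs_per_pixel = self.c_out * (self.c_in // self.groups) * self.k * self.k
        return 2 * macs_per_pixel * self.out_hw[0] * self.out_hw[1]


def totals(layers):
    """Return (total parameters, total FLOPs) for a list of ConvSpec layers."""
    return sum(layer.params() for layer in layers), sum(layer.flops() for layer in layers)


def reduction(base: float, ours: float) -> float:
    """Fractional saving of `ours` relative to `base` (0.17 means 17% smaller)."""
    return 1.0 - ours / base


# Toy comparison: one dense 3x3 conv vs. a depthwise 3x3 followed by a pointwise 1x1 conv.
dense = [ConvSpec(64, 64, 3, (80, 80))]
light = [ConvSpec(64, 64, 3, (80, 80), groups=64), ConvSpec(64, 64, 1, (80, 80))]
(p0, f0), (p1, f1) = totals(dense), totals(light)
print(p0, p1, f"params -{reduction(p0, p1):.0%}, FLOPs -{reduction(f0, f1):.0%}")
```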

Results & Impact

The proposed model reaches about 93% of YOLOv12-L’s detection accuracy while using roughly 17% fewer parameters and 46% less computation (FLOPs), making it well suited for deployment on edge devices and field robots.
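The headline figures can also be restated as ratios to the baseline. Writing $A$ for the detection accuracy metric (the specific metric is not named here), $P$ for the parameter count, and $F$ for FLOPs, and using the rounded figures above:

$$
\begin{aligned}
\frac{A_{\text{ours}}}{A_{\text{YOLOv12-L}}} &\approx 0.93, \qquad
\frac{P_{\text{ours}}}{P_{\text{YOLOv12-L}}} \approx 1 - 0.17 = 0.83, \qquad
\frac{F_{\text{ours}}}{F_{\text{YOLOv12-L}}} \approx 1 - 0.46 = 0.54, \\[4pt]
\frac{(A/F)_{\text{ours}}}{(A/F)_{\text{YOLOv12-L}}} &\approx \frac{0.93}{0.54} \approx 1.72, \qquad
\frac{(A/P)_{\text{ours}}}{(A/P)_{\text{YOLOv12-L}}} \approx \frac{0.93}{0.83} \approx 1.12.
\end{aligned}
$$

In other words, the model keeps most of the baseline's accuracy at roughly half its computation, which works out to about 1.7 times the accuracy per FLOP; these derived ratios inherit the rounding of the reported percentages.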

This work demonstrates that combining modern sequence modeling (Mamba) with frequency-domain reasoning can yield compact yet powerful detectors for agricultural applications.