Multimodal Yield Forecasting with Vision–Weather–Growth Fusion

A multimodal time-series model that integrates vision, weather, phenological growth dynamics, and spatial layout encoding to produce accurate and robust yield forecasts for precision agriculture.

Yield is driven by a combination of plant status, environmental conditions, and spatial management. In this project, we build a multimodal forecasting model that combines image-derived features, weather data, growth-stage information, and spatial layout representations to predict yield in strawberry production.

Methodology

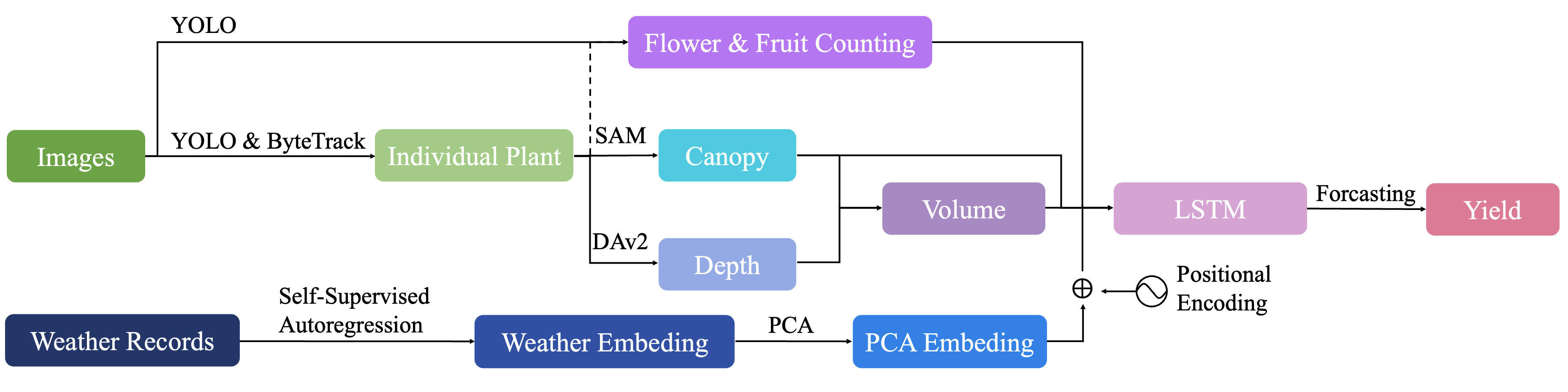

The architecture treats yield prediction as a multimodal sequence modeling problem:

- Vision features. Extract visual features from field imagery that capture canopy size, density, and health.

- Weather and environment. Integrate historical and current weather features such as temperature and precipitation to model environmental drivers of yield.

- Phenological dynamics. Encode growth-stage and phenological information to provide context on where plants are in their development cycles.

- Spatial layout encoding. Incorporate the spatial configuration of plots or rows to capture management patterns and local interactions.

- Multimodal fusion. Fuse all modalities in a time-series model that outputs yield forecasts with calibrated uncertainty for decision support.
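The data flow described in the list above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the project's implementation: the feature sizes, the concatenation-based fusion, the plain tanh recurrent layer, and the Gaussian output head (mean and log-variance) are all hypothetical choices, and the weights are random rather than trained. The standard deviation it returns is therefore not calibrated; calibration would require training and a separate check on held-out data.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes; the project description does not specify them.
T = 8  # number of time steps, e.g. one observation per week
DIMS = {"vision": 16, "weather": 4, "phenology": 3, "layout": 5}
HIDDEN = 12


def fuse(features):
    """Concatenate per-step modality features into one (T, sum of dims) array."""
    return np.concatenate([features[name] for name in DIMS], axis=-1)


def init_params(in_dim, hidden):
    """Random, untrained weights for a tanh RNN with a Gaussian output head."""
    scale = 0.1
    return {
        "W_in": rng.normal(0, scale, (in_dim, hidden)),
        "W_h": rng.normal(0, scale, (hidden, hidden)),
        "b": np.zeros(hidden),
        "w_mean": rng.normal(0, scale, hidden),
        "w_logvar": rng.normal(0, scale, hidden),
    }


def forecast(x, params):
    """Run the RNN over the fused sequence and return (mean, std) of the yield."""
    h = np.zeros(params["W_h"].shape[0])
    for x_t in x:
        h = np.tanh(x_t @ params["W_in"] + h @ params["W_h"] + params["b"])
    mean = float(h @ params["w_mean"])
    std = float(np.exp(0.5 * (h @ params["w_logvar"])))  # exp keeps std positive
    return mean, std


# Placeholder inputs: time-varying modalities are random arrays of shape (T, d).
features = {
    name: rng.normal(size=(T, DIMS[name]))
    for name in ("vision", "weather", "phenology")
}
# The layout encoding is static, so it is repeated at every time step.
features["layout"] = np.tile(rng.normal(size=DIMS["layout"]), (T, 1))

fused = fuse(features)
params = init_params(fused.shape[1], HIDDEN)
mean, std = forecast(fused, params)
print(fused.shape, mean, std)
```

Concatenation is the simplest way to combine modalities; attention-based or gated fusion are common alternatives when modalities differ in reliability across time steps.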

Results & Impact

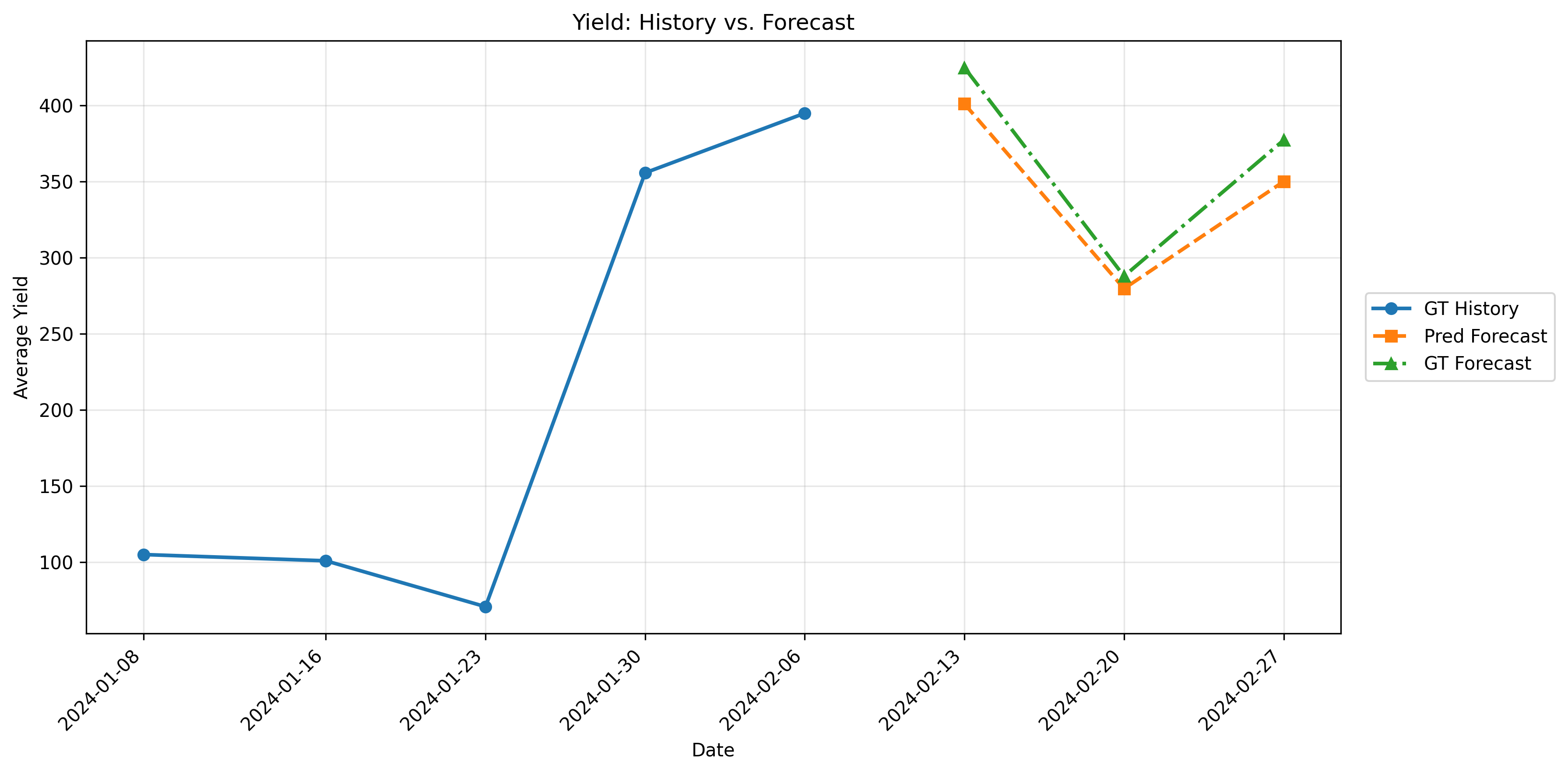

The multimodal model forecasts yield more accurately and with greater stability than vision-only (unimodal) baselines, with the clearest gains under cross-season evaluation.
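One common way to set up a cross-season evaluation is to hold out each season in turn, train on the remaining seasons, and score the held-out one. The sketch below assumes that protocol; it is not confirmed as the one used in this project. The season labels and yield values are made up, the "model" is a placeholder that predicts the training-set mean, and `leave_one_season_out` and `mae` are hypothetical helper names.

```python
import numpy as np


def leave_one_season_out(seasons):
    """Yield (held_out_season, train_indices, test_indices) for each season."""
    seasons = np.asarray(seasons)
    for held_out in np.unique(seasons):
        test_idx = np.flatnonzero(seasons == held_out)
        train_idx = np.flatnonzero(seasons != held_out)
        yield held_out, train_idx, test_idx


def mae(y_true, y_pred):
    """Mean absolute error between observed and predicted yield."""
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))


# Made-up data for illustration only: one season label and one yield per plot.
seasons = np.array([2021, 2021, 2022, 2022, 2023, 2023])
yields = np.array([1.0, 1.2, 0.8, 1.0, 1.4, 1.6])

scores = {}
for season, train_idx, test_idx in leave_one_season_out(seasons):
    # Placeholder model: predict the mean yield of the training seasons.
    baseline = yields[train_idx].mean()
    preds = np.full(len(test_idx), baseline)
    scores[int(season)] = mae(yields[test_idx], preds)

# Spread of the error across held-out seasons, one possible proxy for stability.
spread = float(np.std(list(scores.values())))
print(scores, spread)
```

Reporting the per-season error alongside its spread separates average accuracy from season-to-season consistency, which is one possible reading of "stability" here.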

This work underscores the importance of combining visual, environmental, temporal, and spatial cues when building predictive models for precision agriculture.