IR/RGB Contrastive Fusion for Robust Automatic Target Recognition

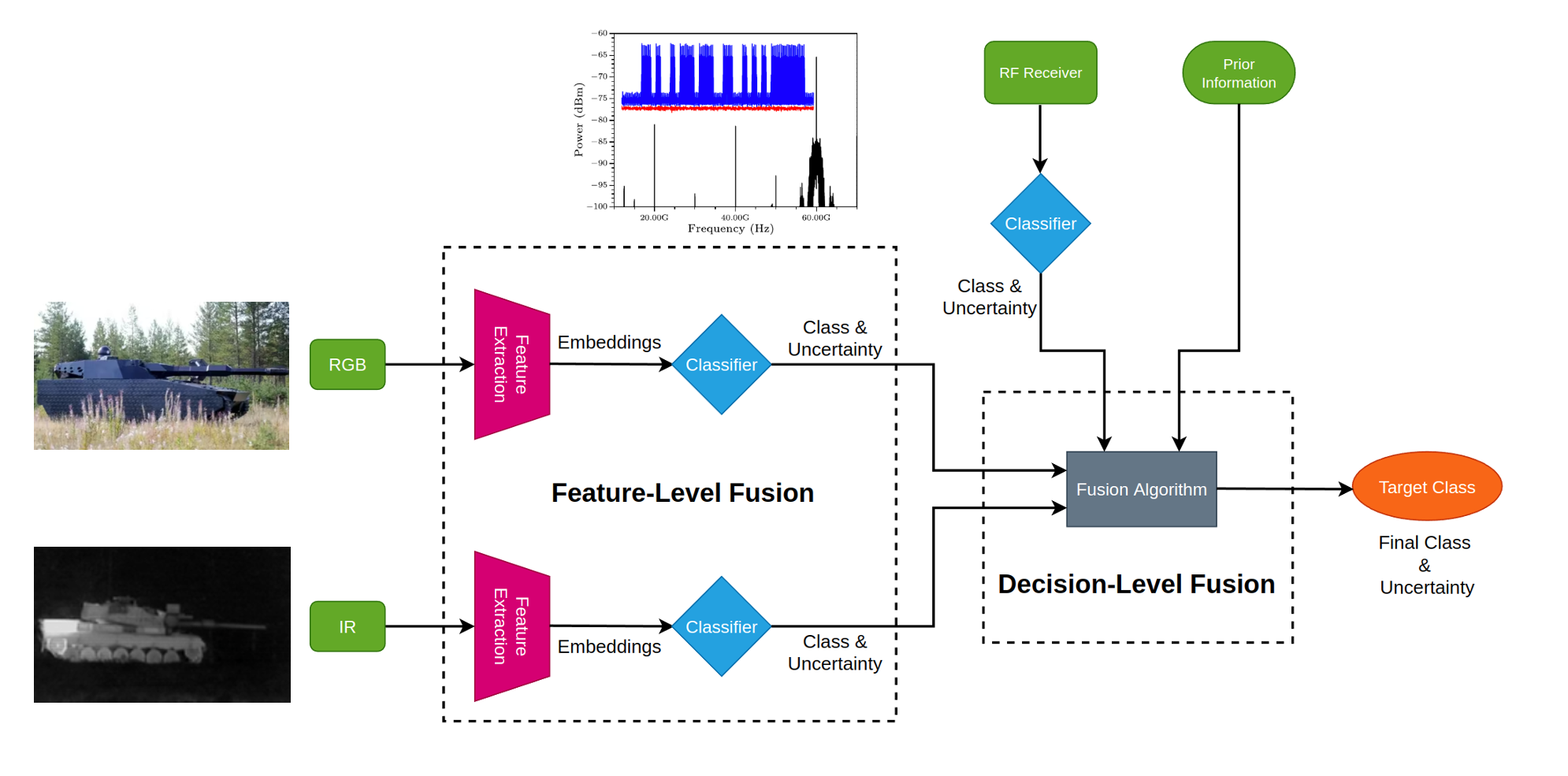

A multimodal automatic target recognition system that uses frozen DINOv2 backbones for IR and RGB, contrastive learning for embedding alignment, and Bayesian decision fusion for robust classification.

Automatic target recognition (ATR) benefits from combining infrared (IR) and RGB sensing, but fusing the two modalities effectively is non-trivial. In this project, we build an ATR pipeline that learns aligned IR and RGB embeddings via contrastive learning on top of frozen DINOv2 backbones and then fuses the outputs of per-modality classifiers with a Bayesian network for robust decisions.

Methodology

The design separates representation learning, classification, and decision fusion:

- Frozen DINOv2 backbones. Use separate, frozen DINOv2 encoders for IR and RGB inputs to obtain strong, pretrained feature representations for each modality.

- Projection heads and contrastive loss. Attach lightweight projection heads to each backbone and train them with a contrastive loss so that corresponding IR and RGB views of the same target are drawn together in embedding space.

- Modality-specific classifiers. Train independent IR and RGB classifiers on top of the learned embeddings to output per-modality class probabilities.

- Bayesian decision fusion. Use a Bayesian network to fuse the classifier outputs, producing a single, robust ATR decision that exploits the complementary strengths of both modalities.
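The projection-head and contrastive-loss step can be illustrated with a minimal sketch. The source does not name the exact loss, so this assumes a symmetric InfoNCE objective (as popularized by CLIP). In this objective, the i-th IR and i-th RGB embedding in a batch form the positive pair, and every other pairing in the batch acts as a negative. The function names and the temperature of 0.07 are illustrative choices. Plain Python lists stand in for the projection-head outputs that a real implementation would compute with a tensor library.

```python
import math
from typing import List

Vector = List[float]


def l2_normalize(v: Vector) -> Vector:
    # Unit-normalize so dot products become cosine similarities.
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def cross_entropy_rows(logits: List[List[float]]) -> float:
    """Mean cross-entropy where the correct column for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def symmetric_info_nce(ir: List[Vector], rgb: List[Vector],
                       temperature: float = 0.07) -> float:
    """Assumed CLIP-style loss: IR->RGB and RGB->IR cross-entropy, averaged."""
    ir_n = [l2_normalize(v) for v in ir]
    rgb_n = [l2_normalize(v) for v in rgb]
    # logits[i][j]: similarity of IR sample i and RGB sample j, scaled by 1/temperature.
    logits = [[sum(a * b for a, b in zip(u, w)) / temperature for w in rgb_n]
              for u in ir_n]
    transposed = [list(col) for col in zip(*logits)]  # RGB->IR direction
    return 0.5 * (cross_entropy_rows(logits) + cross_entropy_rows(transposed))


# Toy stand-ins for projection-head outputs of three paired IR/RGB views.
ir = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.2], [0.1, 0.0, 1.0]]
rgb_aligned = [[0.9, 0.2, 0.0], [0.1, 1.0, 0.1], [0.0, 0.1, 0.9]]
rgb_shuffled = [rgb_aligned[1], rgb_aligned[2], rgb_aligned[0]]

aligned = symmetric_info_nce(ir, rgb_aligned)
shuffled = symmetric_info_nce(ir, rgb_shuffled)
print(aligned < shuffled)  # True
```

Correctly paired views yield a much lower loss than mismatched ones. Because the backbones are frozen, the gradients of such a loss would update only the projection heads.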
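For the Bayesian decision-fusion step, the simplest Bayesian network has one class node whose two children, the IR and RGB observations, are conditionally independent given the class. This structure is an assumption made for illustration; the project's actual network may model more, such as per-sensor reliability. Under that assumption, Bayes' rule gives P(c | x_IR, x_RGB) ∝ P(c | x_IR) · P(c | x_RGB) / P(c). The sketch below applies this rule to the two classifiers' output probabilities. The class labels and probabilities are hypothetical.

```python
from typing import Dict, Optional

Posterior = Dict[str, float]


def fuse_posteriors(p_ir: Posterior, p_rgb: Posterior,
                    prior: Optional[Posterior] = None) -> Posterior:
    """Naive-Bayes fusion of two per-modality class posteriors.

    Assumes IR and RGB observations are conditionally independent given the
    class, so P(c | ir, rgb) is proportional to P(c | ir) * P(c | rgb) / P(c).
    """
    classes = list(p_ir)
    if prior is None:
        # Uniform class prior when none is supplied (an illustrative default).
        prior = {c: 1.0 / len(classes) for c in classes}
    unnormalized = {c: p_ir[c] * p_rgb[c] / prior[c] for c in classes}
    total = sum(unnormalized.values())
    if total == 0.0:
        raise ValueError("modalities assign zero joint probability to every class")
    return {c: v / total for c, v in unnormalized.items()}


# Hypothetical class labels and classifier outputs.
p_ir = {"tank": 0.6, "truck": 0.3, "civilian": 0.1}
p_rgb = {"tank": 0.5, "truck": 0.4, "civilian": 0.1}
fused = fuse_posteriors(p_ir, p_rgb)
print(max(fused, key=fused.get))  # tank

# An uninformative (uniform) IR posterior leaves the RGB posterior unchanged.
uniform_ir = {c: 1.0 / 3 for c in p_rgb}
print(fuse_posteriors(uniform_ir, p_rgb))
```

When both classifiers lean toward the same class, the fused posterior becomes more confident than either one alone. When one modality is uninformative, the result falls back to the other modality. This is one way a fusion stage can stay robust when a single sensor is unreliable.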

Results & Impact

The contrastive fusion approach demonstrates measurable gains in classification performance and robustness, particularly in conditions where one modality alone is unreliable.

This project showcases how frozen foundation models, contrastive learning, and probabilistic fusion can be combined into a practical multimodal ATR system.

Read the detailed report: Multimodal ATR Technical Report (PDF).